Less AI Posturing, More Proof of Work

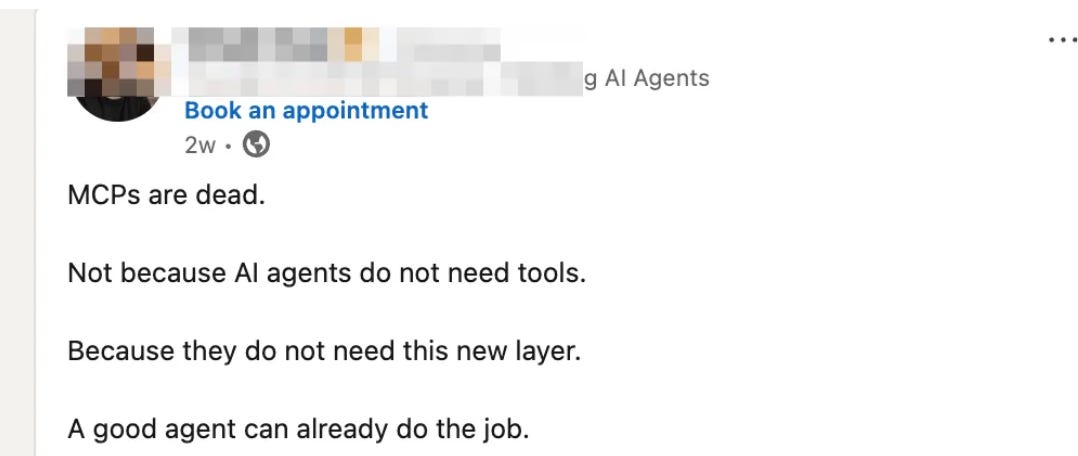

This post showed up in my feed last week. No attribution needed - you’ve seen a hundred like it:

…

Maybe that’s right. Maybe it’s wrong. I genuinely don’t know, and neither does the person who wrote it, because there’s no evidence attached. No app. No production system. Just the confident rhythm of a LinkedIn post optimized for reshares.

Most AI discourse right now falls into the same traps.

The hot take

Bold claim, short lines, confident conclusion. “MCP is dead.” “OpenClaw is taking over.” “Cursor is beating Claude.” The posts rack up thousands of impressions and age out of relevance within 90 days, at which point the same account posts the opposite take with equal conviction. No timestamps, no cost numbers. Just vibes dressed up as arguments.

The enterprise crawl

Meanwhile, most enterprises are nowhere near the hype.

Hundreds of employees get involved. A steering committee forms. A vendor gets selected. Six months later there’s a governance framework, a hundred internal architecture docs, and a press release about autonomous software that nobody has shipped yet. Eighteen months later: a demo environment and a bunch of services agreements, but no product.

The people making AI investment decisions are rarely the people who will live inside the outputs. The result is a lot of license spending and very little shipped software.

Cursor is the clearest illustration: buy 100 seats, tell the engineering org to “use AI,” and wait for productivity to compound.

But then, in practice, experienced developers stop shipping code. They spend more time in meetings.

Because at the end of the day, the tool was never the outcome.

Cursor or no Cursor, someone still has to define the work.

What proof of work actually looks like

Not a post. Not a thread. An app someone can open. One that someone other than you actually tried, got confused by, or got what they needed from.

A cost that shows up on a real invoice. A workflow that breaks at step three and tells you something true about your assumptions.

What counts is not the take. It’s whether you can turn a workflow that lives in three spreadsheets and someone’s memory into something real enough that another person can try it, break it, and tell you where you misunderstood the problem.

Recall that “MCP is dead” claim above. Well, here is our newest MCP-powered app, which you can try in under 60 seconds and decide for yourself:

We shipped the above MCP-powered product not because it defines our roadmap, but because we genuinely thought others would find it valuable, and because it will teach us something concrete that another month of abstract AI opinions never will.

The demo compresses what normally takes founders days into minutes - from identifying investors to getting a meeting via a warm network introduction.

It’s a real AI breakthrough, in ways even I didn’t anticipate.

And if you watched the above and still think “MCP is dead,” I have the receipts (i.e., users signing up daily).

The Cursor call, revisited

A few months ago I wrote about Cursor and made what felt like a contrarian read: that the 2.0 changes were survival tactics, that the margin pressure was existential, and that the co-founder departure likely signaled real disagreements about product direction.

Two things have since happened that prove it right.

Cursor shipped Composer 2, their own proprietary coding model built on top of open-source Kimi K2.5, and they did not frame it as a cost-cutting move. But the numbers make the logic clear. An internal Cursor analysis reported by The Decoder found that Anthropic was effectively burning thousands of dollars in compute per user per month while charging $200. You cannot make the unit economics work when your main supplier is subsidizing your customers at that ratio. At those economics, building your own model stops being a product bet and becomes a basic condition of survival.
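To make that arithmetic concrete, here is a rough sketch in Python. Only the $200 monthly price comes from the reporting above. The compute costs are placeholder values standing in for “thousands of dollars,” and the function names are purely illustrative, so treat this as a back-of-envelope model rather than anyone’s actual numbers.

```python
# Back-of-envelope subscription economics.
# ASSUMPTION: only the $200 price is taken from the text; every compute
# cost below is a hypothetical placeholder, not a reported figure.

MONTHLY_PRICE_USD = 200

# Hypothetical stand-ins for "thousands of dollars in compute per user per month".
HYPOTHETICAL_COMPUTE_COSTS_USD = [1_000, 2_000, 5_000]


def monthly_margin(price_usd: float, compute_cost_usd: float) -> float:
    """Revenue minus compute cost for one user in one month (negative = loss)."""
    return price_usd - compute_cost_usd


def cost_to_price_ratio(price_usd: float, compute_cost_usd: float) -> float:
    """How many dollars of compute are spent per dollar of subscription revenue."""
    return compute_cost_usd / price_usd


if __name__ == "__main__":
    for cost in HYPOTHETICAL_COMPUTE_COSTS_USD:
        margin = monthly_margin(MONTHLY_PRICE_USD, cost)
        ratio = cost_to_price_ratio(MONTHLY_PRICE_USD, cost)
        print(f"compute=${cost:,}  margin=${margin:,.0f}  cost/price={ratio:.1f}x")
```

Whatever the real figure is, any compute cost above the subscription price gives a negative margin on every user, and the loss grows with usage rather than shrinking with scale.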

The enterprise disappointment is getting documented too. The NYT recently ran a piece on “code overload,” the phenomenon where AI-assisted codebases generate more volume than engineering teams can responsibly review and maintain. Buying 100 Cursor licenses doesn’t produce 100x more shipped product. It produces a lot more code that someone still has to understand, own, and defend.

The premise behind those bulk purchases, that the tool is the outcome, was always wrong. The enterprises buying in bulk without a plan for what “done” looks like are finding that out now.

What we’re doing about it

We’re going to show our work in public. Sometimes that will be a full product. Sometimes a narrower AI app feature shipped fast. Fewer opinions. More receipts.

We’re not a custom dev shop. We’re looking for recurring problems that are painful enough that people are ready to pay someone to solve them.

If you keep seeing something break despite all the AI noise, tell us what it is: take the survey. If you’re frustrated with an email client or a CRM experience, we want to know. If you think your daily web experience is broken, tell us the specifics.

If it sounds like something worth building, we’ll talk.

The takes are free. The receipts cost something.

- SG@KLABS

We are just starting out…